AI Governance: Control the Data That Powers Your AI

Every LLM your organization deploys is only as safe as the data behind it. BigID's AI governance platform gives you the discovery, classification, and policy controls to ensure only the right data enters your AI pipelines. Take a self-guided tour to see how BigID helps security, compliance, and data teams:

- Discover and classify sensitive data in AI training datasets before models go live

- Govern unstructured data inputs to LLMs and generative AI tools

- Detect and remediate shadow AI data exposure across the enterprise

- Enforce data-use policies that align with the EU AI Act and NIST AI RMF

- Build a continuous AI governance program — not a one-time audit

Recognized as the #1 data security, compliance, and AI data governance solution

What is AI Governance?

AI governance is the set of policies, processes, and technical controls that organizations use to ensure AI systems are built and operated responsibly — with appropriate oversight of the data, models, and outputs involved.

As enterprises deploy LLMs and generative AI tools at scale, AI governance frameworks must address three core risk areas:

- Data risk: Sensitive or regulated data entering AI training sets without proper classification or consent

- Output risk: Unauthorized disclosure of confidential information through AI-generated responses

- Compliance risk: Non-compliance with emerging AI regulations including the EU AI Act, NIST AI RMF, ISO 42001, and GDPR as applied to AI data processing

Effective AI governance requires both technical controls — like automated data discovery and classification — and organizational policies governing who can use AI systems and what data they can access.

The AI Data Risk Gap Is Widening

High-profile incidents have made clear the stakes of ungoverned AI data. In 2023, Samsung engineers inadvertently leaked proprietary source code by inputting it into ChatGPT — one of dozens of enterprise AI data incidents that year. Microsoft Copilot deployments have exposed internal communications in organizations without proper access controls in place.

BigID's AI Risk & Readiness Report, which draws on insights from hundreds of security, compliance, and data leaders, makes the key findings clear:

- 72% of enterprises have deployed generative AI tools without a formal governance policy

- 61% of security leaders say they don't have full visibility into what data their AI models are training on

- Enforcement of regulatory penalties for AI-related data violations is expected to begin in the EU in 2025

AI is moving faster than governance. BigID closes the gap.

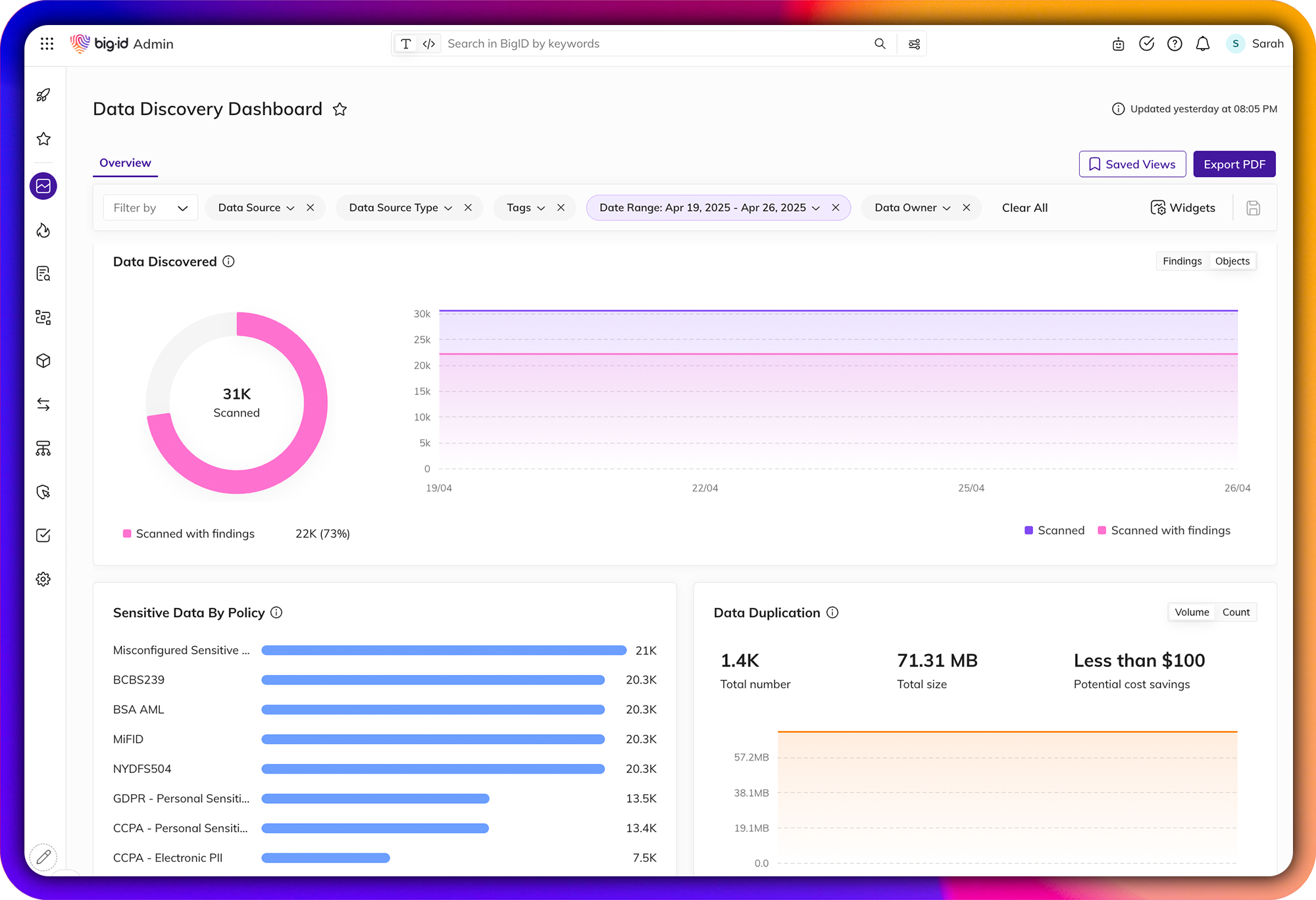

Discover & Classify AI Training Data

Know what data is feeding your models — before you deploy.

BigID automatically discovers and classifies all data across your environment, including unstructured data, cloud data, SaaS platforms, and on-prem repositories, to give you a complete picture of what's entering your AI training pipelines. Identify PII, IP, financial records, credentials, and other sensitive data before it becomes a model liability.
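To make the idea of classifying data before it reaches a training pipeline concrete, here is a minimal Python sketch. It is not BigID's implementation or API. The pattern set, the label names, and the `classify` and `scan_records` helpers are hypothetical, and simple regular expressions stand in for the far richer detection a production classifier would use.

```python
import re

# Hypothetical patterns for illustration only; a real classifier would use
# many more detectors plus validation and context, not three regexes.
PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "aws_access_key": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
}


def classify(text: str) -> set[str]:
    """Return the labels whose pattern matches somewhere in the text."""
    return {label for label, pattern in PATTERNS.items() if pattern.search(text)}


def scan_records(records: list[str]) -> dict[int, set[str]]:
    """Map the index of each record with at least one finding to its labels."""
    findings: dict[int, set[str]] = {}
    for index, record in enumerate(records):
        labels = classify(record)
        if labels:
            findings[index] = labels
    return findings
```

Running `scan_records` over a candidate training set yields the indices of records that need review or removal before the data is handed to a model.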


Govern Generative AI Inputs & Outputs

Control what data enters your LLMs and what information they can surface.

BigID lets you define and enforce rules for what data can be used in AI inputs, preventing sensitive records from entering model training sets or prompt contexts. Monitor AI data flows in real time and alert security teams as soon as high-risk data is detected in AI pipelines.
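As an illustration of what a data-use rule for AI inputs can look like, the following Python sketch filters already-classified documents before they are added to a prompt context or training set. It is a generic example under stated assumptions, not BigID's policy engine. The `Document` type, the `BLOCKED_LABELS` set, and the `apply_policy` function are all hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    labels: frozenset  # classification labels assigned by an upstream scan


# Hypothetical policy: labels that must never enter a prompt or training set.
BLOCKED_LABELS = frozenset({"pii", "credentials", "financial"})


def apply_policy(docs):
    """Split documents into (allowed, alerts) according to BLOCKED_LABELS.

    allowed: documents with no blocked label, safe to pass downstream.
    alerts:  (doc_id, sorted violating labels) pairs for the security team.
    """
    allowed, alerts = [], []
    for doc in docs:
        violations = doc.labels & BLOCKED_LABELS
        if violations:
            alerts.append((doc.doc_id, sorted(violations)))
        else:
            allowed.append(doc)
    return allowed, alerts
```

Keeping the policy as a plain set of labels separates the rule (what is blocked) from the enforcement point (where the filter runs), so the same rule can be applied at training time and at prompt time.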

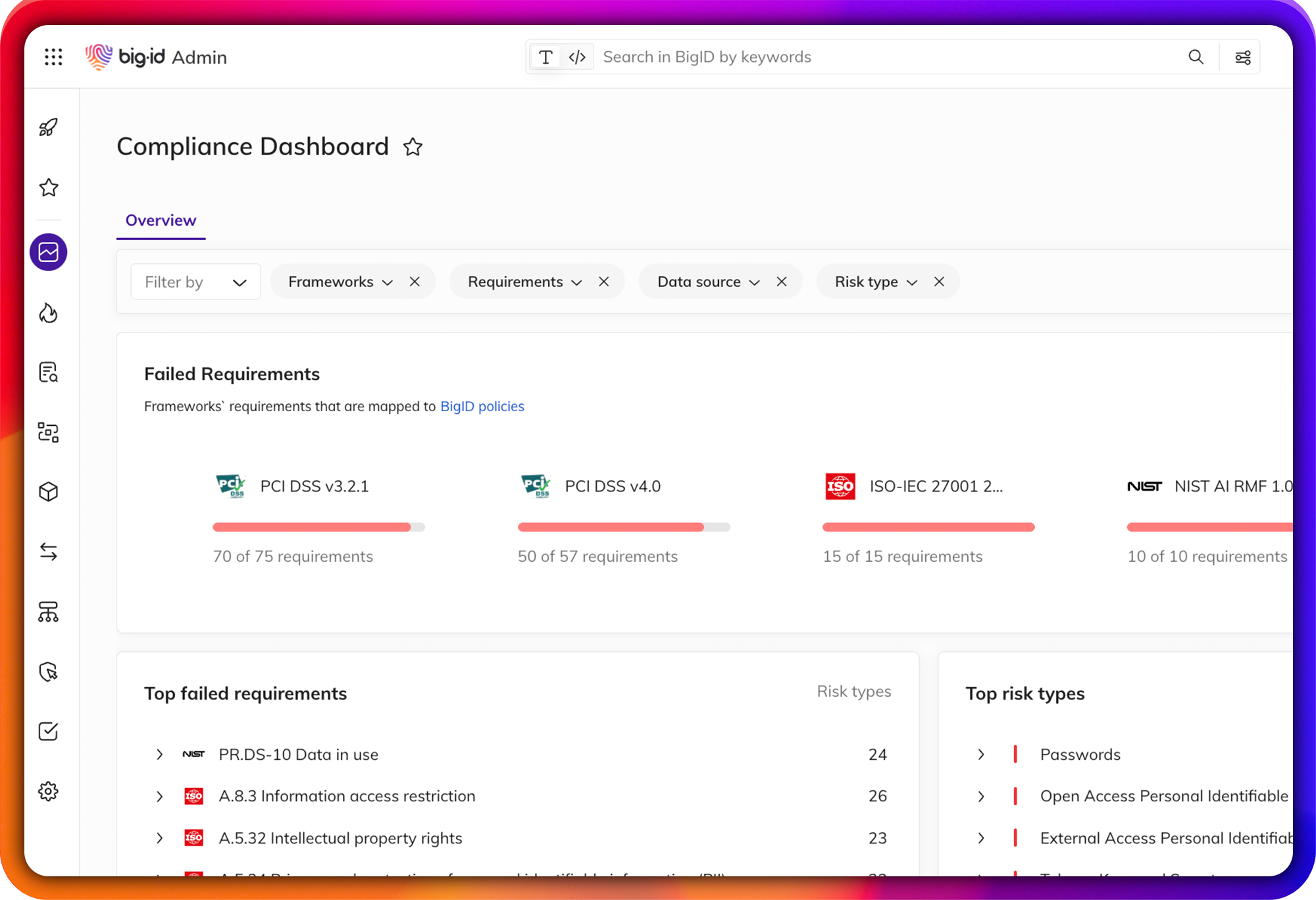

Stay Compliant with AI Regulations

Meet EU AI Act, NIST AI RMF, ISO 42001, and GDPR requirements for AI data.

AI regulation is accelerating globally. BigID's AI governance capabilities align with:

- EU AI Act: Data governance requirements for high-risk AI systems, including data quality, documentation, and transparency obligations

- NIST AI RMF: The Govern function — establishing policies, accountability, and risk management processes for AI

- ISO 42001: AI management system standards for responsible AI development and deployment

- GDPR / CPRA: Lawfulness of processing requirements as applied to AI training data containing personal information


Detect Shadow AI & Unauthorized Data Use

Find the AI tools your employees are already using — and the data they're exposing.

Shadow AI (employees using unsanctioned AI tools with corporate data) is one of the fastest-growing enterprise data risks. BigID helps organizations identify unauthorized AI usage patterns, discover what data is being exposed through shadow AI tools, and enforce governance policies before incidents occur.

FAQ:

What is AI governance?

AI governance is the framework of policies, processes, and technical controls organizations use to ensure AI systems are developed and operated responsibly — with appropriate oversight of the data inputs, model behaviors, and outputs involved. It encompasses data quality controls, access management, compliance with AI-specific regulations (EU AI Act, NIST AI RMF), and ongoing monitoring of AI systems for bias, misuse, and data exposure risk.

Why do organizations need AI governance?

Without AI governance, organizations risk exposing sensitive customer data through LLM training sets, generating non-compliant AI outputs, enabling shadow AI usage that bypasses security controls, and violating emerging AI regulations like the EU AI Act. A formal AI governance program reduces these risks while enabling faster, more confident AI adoption.

How does BigID help with AI governance?

BigID provides the data discovery, classification, and policy enforcement capabilities that form the technical foundation of an AI governance program. Organizations use BigID to find and classify sensitive data before it enters AI models, enforce data-use policies across AI pipelines, detect shadow AI activity, and generate compliance documentation for the EU AI Act and NIST AI RMF.

What is the EU AI Act and how does it affect data governance?

The EU AI Act is a landmark regulation that classifies AI systems by risk level and imposes data governance obligations on organizations deploying high-risk AI, including requirements for data quality, documentation of training data sources, transparency to affected individuals, and ongoing human oversight. Organizations must be able to demonstrate what data trained their models and whether it meets quality and compliance standards.

Talk to a BigID AI Governance specialist today

See how BigID can help organizations like yours extend data governance and security to modern conversational AI and LLMs.